Qt test system

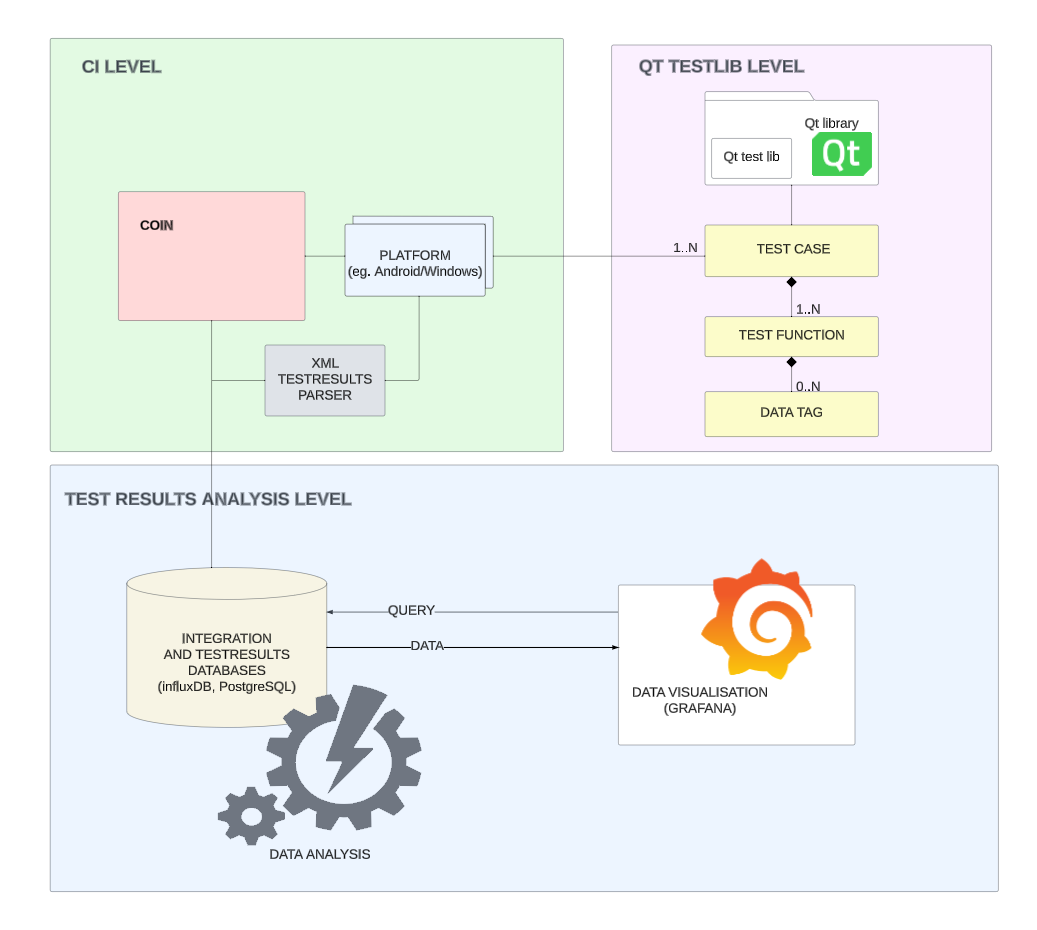

Test information for the Qt Project is collected at three levels:

1) Qt test library LEVEL

basic functionality

The Qt Test library provides the fundamental testing functionality. Automated tests need to include it (testlib).

When a run finishes, the test should return one of the following results: PASS or FAIL. In special cases other values are possible; see XFAIL.

crashes

A crash is recorded when the test executable does not provide a result, or when its XML output is corrupted. During test execution various errors and signals can occur, such as SIGSEGV (segmentation fault), SIGABRT and others. The root cause may lie in the test source code or in other parts of the Qt library.
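The crash rule above can be sketched in a few lines of Python. This is only an illustration, not how Coin is implemented: the function name `classify_test_output` and the simplified XML snippets are assumptions made for the example.

```python
import xml.etree.ElementTree as ET


def classify_test_output(xml_text):
    """Return "CRASH" if the output is missing or is not well-formed XML, else "OK"."""
    if xml_text is None or not xml_text.strip():
        # The executable produced no result at all.
        return "CRASH"
    try:
        ET.fromstring(xml_text)
    except ET.ParseError:
        # The XML is truncated or otherwise corrupted, e.g. the process died mid-write.
        return "CRASH"
    return "OK"


# Simplified, hypothetical outputs used only for illustration.
complete = "<TestCase name='tst_Example'><TestFunction name='a'/></TestCase>"
truncated = "<TestCase name='tst_Example'><TestFunction name='a'"

print(classify_test_output(complete))
print(classify_test_output(truncated))
print(classify_test_output(""))
```

A real classifier would also need to look at exit codes and signals; the sketch covers only the two conditions named in the text.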

blacklisting

Blacklisting is an additional piece of functionality. Blacklisted tests are run and provide results, but the results are ignored at the CI level. Note that these tests are still executed and thus consume resources; they return results (BPASS and BFAIL), and the outcomes are stored in the database.

Blacklisted tests, however, do not affect the success of an integration. Blacklisting should be treated as a temporary solution: a SHORT TERM quarantine of the test. More information about the blacklist format can be found here.
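How blacklisted results stay out of the integration verdict can be shown with a small Python sketch. The result names come from the text above; the function `integration_succeeds`, the test names, and the choice to treat only FAIL as blocking are assumptions for illustration, not Coin's actual logic.

```python
# Assumption: only a plain FAIL blocks an integration in this sketch.
BLOCKING_RESULTS = {"FAIL"}


def integration_succeeds(results):
    """results maps a test name to its result string, e.g. "PASS" or "BFAIL"."""
    return not any(result in BLOCKING_RESULTS for result in results.values())


# Hypothetical test names and results.
run = {
    "tst_a::works": "PASS",
    "tst_b::quarantined": "BFAIL",  # blacklisted failure: stored, but ignored
    "tst_c::expected": "XFAIL",
}

print(integration_succeeds(run))
print(integration_succeeds({**run, "tst_d::broken": "FAIL"}))
```

The blacklisted failure is still part of the recorded results, which matches the point that such tests are executed and stored even though they do not block the integration.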

skipping

Some tests are not supposed to be run on certain platforms. To disable a test on a particular platform, use the QSKIP macro. A skipped test stops executing at the QSKIP call and returns neither a PASS nor a FAIL result. See also: Select Appropriate Mechanisms to Exclude Tests

2) CI LEVEL

The CI (Continuous Integration) system, Coin, automates builds and manages test runs on different platforms. The platforms include popular desktop operating systems such as macOS, Windows, and Ubuntu, but also targets such as QNX or QEMU.

Qt code is regularly tested on approximately 20 distinct operating systems (platforms), and this list changes over time. Because Qt is cross-platform, tests can be created on one platform and executed on another; in most cases the actual platform is virtualized (though there are exceptions, such as macOS). Automated testing across numerous virtualized platforms facilitates swift integration but introduces new challenges. One such challenge is maintaining system stability and ensuring repeatable results. Tests may fail for various reasons, and the root cause of a failure is not necessarily an error in the source code. We are currently working on distinguishing between issues that originate in the integration process (Coin) and those linked to the test source code.

flakiness

Coin introduces a flakiness classification for tests. Each test that fails is repeated up to 5 times (see link). A test is classified as failed only if it fails 6 times in a row (the first run plus 5 repetitions); a test that fails at first but passes in one of the repetitions is classified as flaky. Both failed and flaky test runs are stored in the database. We collect the history of such tests: for the dev branch it can be found on the "Fast check" dashboard, and for all other branches on the "Slow check" dashboard.
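The repetition rule can be written down as a short Python sketch. It assumes that a test which fails first and then passes in a repetition counts as flaky; the names `classify_with_reruns` and `scripted` are hypothetical helpers for this example and do not come from Coin.

```python
MAX_REPETITIONS = 5  # from the text: a failed test is repeated up to 5 times


def classify_with_reruns(run_test, max_repetitions=MAX_REPETITIONS):
    """run_test is a callable that returns True on pass and False on failure."""
    if run_test():
        return "passed"
    for _ in range(max_repetitions):
        if run_test():
            # Failed at least once, then passed: the result is not repeatable.
            return "flaky"
    # First run plus all repetitions failed.
    return "failed"


def scripted(outcomes):
    """Build a fake test that returns the given outcomes one call at a time."""
    remaining = iter(outcomes)
    return lambda: next(remaining)


print(classify_with_reruns(scripted([True])))
print(classify_with_reruns(scripted([False, False, True])))
print(classify_with_reruns(scripted([False] * 6)))
```

With the default of 5 repetitions, the "failed" verdict is reached only after exactly 6 consecutive failing runs.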

integration related crashes

In general, tests that for various reasons do not produce results are classified as crashes. The term is not limited to signals that end a process; crashes can also be caused by timeouts and other events. Some tests are not run at all because of, for example, a missing library. As mentioned earlier, identifying and classifying crashes is an area we are currently focusing on.

3) TEST RESULTS STATISTICS LEVEL

We store integration results in the database for about two years and regularly analyze them.

correlation between flakiness and failures

One important conclusion is the high correlation between flakiness and failures. In simpler terms, if a test is not very reliable, it will eventually fail and disrupt the integration process.

increasing number of blacklisted tests

Another observation is the increasing number of blacklisted tests. Tests are created on purpose: their goal is to make sure that the implemented functionality works and is STILL WORKING as designed. By blacklisting a test we unwittingly make it possible to introduce new bugs that the test system would otherwise catch early on. In other words, we create holes/vulnerabilities. That is why the blacklist should only be used as a last resort, as a way to temporarily "quarantine" the test. Quarantine helps to identify the root of the flakiness. Infrastructure-related tests will most likely start passing once the environment changes (keep in mind that the running environment has its fluctuations). In other cases, if the test is still failing after some time (more than 3 months), it requires fixing (or replacing); it should not stay blacklisted.
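The three-month quarantine limit lends itself to a simple periodic check. The Python sketch below is a hypothetical illustration: it approximates "3 months" as 90 days, looks only at how long an entry has been blacklisted (not at whether the test still fails), and uses made-up test names and dates.

```python
from datetime import date, timedelta

# Assumption: "more than 3 months" is approximated as more than 90 days.
QUARANTINE_LIMIT = timedelta(days=90)


def overdue_blacklist_entries(entries, today):
    """entries maps a test name to the date on which it was blacklisted."""
    return sorted(
        name for name, since in entries.items() if today - since > QUARANTINE_LIMIT
    )


# Hypothetical entries used only for illustration.
entries = {
    "tst_old::flaky": date(2024, 1, 10),
    "tst_recent::flaky": date(2024, 5, 20),
}

print(overdue_blacklist_entries(entries, today=date(2024, 6, 1)))
```

Entries returned by such a check are candidates for fixing or replacing rather than for staying on the blacklist.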

identifying the most impactful tests

Dealing with flakiness is an ongoing and sometimes challenging task. We put consistent effort into keeping our tests at good quality. Our analysis identified which tests cause the most trouble in the integration system. The tests with the most negative impact are presented on the "Flaky summary" dashboard. More information on how we got those numbers is available here.

GLOSSARY and links for further reading:

COIN - Qt Continuous Integration System

GRAFANA - observability and visualization platform used to display test results and integration metrics

Qt test library documentation.

QSKIP macro functionality and documentation.

BLACKLIST file format

Guidelines to writing unit tests