Flakiness information

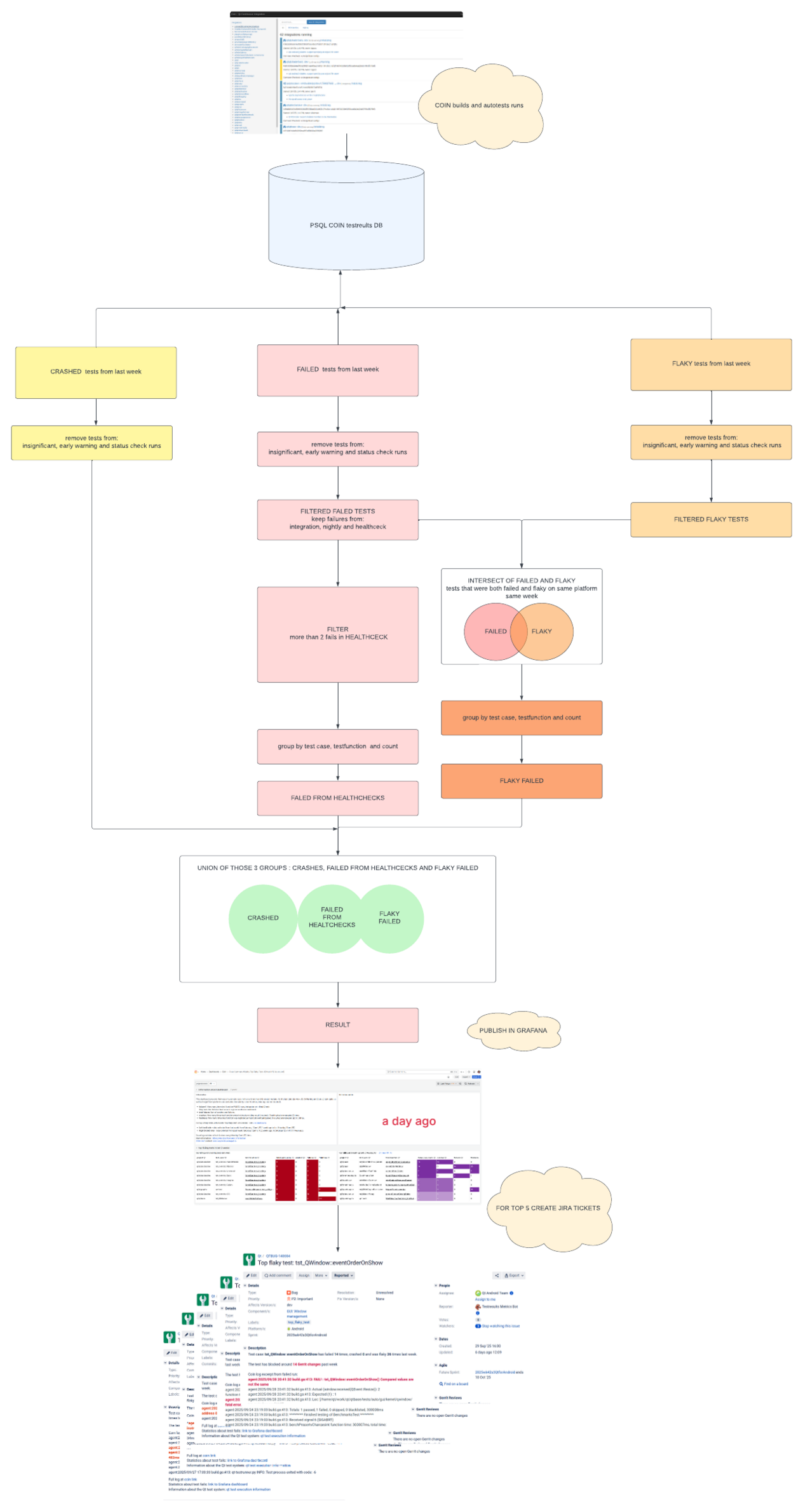

Latest revision as of 16:45, 20 September 2025

Make sure to also check the overview of the Qt test system.

At the end of every week, we run some analytics and prepare a list of the most frequently failing flaky tests for that week.

Those lists are available on the flaky summary dashboard: https://testresults.qt.io/grafana/d/000000007/flaky-summary-ci-test-info-overview?orgId=1

If you are having trouble reproducing a flaky autotest failure, there are some suggestions on the page "How to reproduce autotest fails".

Why does flakiness happen?

Flakiness is an umbrella term for test and build instabilities. In an ideal scenario, if we run the same compiled code n times, it should produce exactly the same result all n times. In the real world, we receive different results, and the impact is particularly significant when those are test results in integrations.

So far we have identified several sources of flakiness:

1) Flakiness rooted in source code, possibly related to the particular operating system version on which the test is run. There can be multiple reasons for it, often related to the abstraction layer between mouse events and the windowing system, to timing, or to other code issues.

2) Flakiness related to virtualization. Most of the runs are executed on virtual machines, and not all virtualization mimics the intended hardware well enough. Those shortcomings lead to flakiness related to the settings of the running environment: some tests are sensitive, for example, to slower-than-usual memory.

3) Flakiness related to crashes. Test execution should return a result, but in some situations the test ends prematurely: the test executable returns a non-ok return code and the output XML file is truncated. In such cases we assume the reason is a crash and attribute it to the last executed function. In some cases more information can be found in the logs, such as signals (SIGKILL, SIGABRT), timeouts, or a call stack. The root of the crash is not necessarily the test executable; it can also be the Qt library or 3rd party libraries.

4) Flakiness related to the CI (continuous integration) system.
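The crash heuristic from point 3 can be sketched roughly as follows. This is a minimal illustration, not the actual CI code: the function name `classify_run` is hypothetical, and the sketch assumes the test log is Qt Test style XML in which each test function is opened by a `<TestFunction name="...">` element.

```python
import re
import xml.etree.ElementTree as ET

# Assumption: every executed test function is logged as <TestFunction name="...">.
_FUNCTION_RE = re.compile(r'<TestFunction\s+name="([^"]*)"')


def classify_run(return_code, xml_text):
    """Return (verdict, function); verdict is 'ok', 'failed' or 'crashed'.

    A run counts as crashed when the return code is non-zero AND the XML
    log cannot be parsed (it is truncated). The crash is then attributed
    to the last test function that appears in the log.
    """
    try:
        ET.fromstring(xml_text)
        truncated = False
    except ET.ParseError:
        truncated = True

    if truncated and return_code != 0:
        names = _FUNCTION_RE.findall(xml_text)
        return ("crashed", names[-1] if names else None)
    return ("ok" if return_code == 0 else "failed", None)
```

For a log that stops in the middle of a function, the last opened `<TestFunction>` is the one reported. As noted above, this only says where execution stopped; the root cause may be in the Qt library or a 3rd party library rather than in the test itself.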

Why is reducing flakiness important?

Flaky tests are the reason for about 30% of failing test integrations; crashes account for roughly another 30% of failures. They cause a lot of frustration and erode trust in tests, because developers cannot be sure whether a test fails for a real reason or because of instabilities. We rerun flaky tests up to 5 times; these reruns slow down integration and consume more power. Failing work items need to be re-run, and that has an impact on CI capacity. If the maximum CI capacity is reached, it additionally causes timeouts and more failures, which creates a self-reinforcing feedback loop in an already complex system. Additionally, the Qt project is growing: every year new automatic tests are added and the code is built on new operating systems. This constant change requires constant customizing and maintaining of the automatic tests to keep flakiness low. In many projects, the source code of the automatic tests has twice as many lines as the actual code under test. Flakiness is observed in most software, not just in Qt project code.
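The rerun policy mentioned above can be illustrated with a small sketch. It is an assumption-laden example rather than the CI implementation: `run_with_reruns` and `run_once` are hypothetical names, and "up to 5 times" is interpreted here as up to 5 reruns after the initial run.

```python
def run_with_reruns(run_once, max_reruns=5):
    """Run a test until it passes or the reruns are used up.

    run_once: hypothetical callable returning True when the test passes.
    Returns (verdict, attempts), where verdict is:
      'pass'  - passed on the first attempt
      'flaky' - failed at least once, then passed on a rerun
      'fail'  - failed on the first attempt and on every rerun
    """
    attempts = 0
    while attempts <= max_reruns:
        attempts += 1
        if run_once():
            return ("pass" if attempts == 1 else "flaky"), attempts
    return "fail", attempts
```

A test that fails twice and then passes is reported as `("flaky", 3)`. The sketch also shows the cost discussed above: a consistently failing test is executed 6 times instead of once.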

How did we get the flaky and failed numbers?

The result of each integration run on the CI (continuous integration system, Coin) is stored in a database. We process the stored information and display it in a tool called Grafana.

A simplified view is presented below (scroll down), with the information relevant to developers. Note that the actual process is more complex and is not presented here.

What are health-checks?

To monitor the stability of the CI, successful qt5 integrations are re-run. The last successful qt5 integration (qt5 dev HEAD with all its submodules at the right revision according to the last submodule update) is re-run at night to check how stable the CI is. These runs, called "HEALTHCHECKS", have already been shown to contain passing, working code and as such should pass. However, due to CI instabilities they often fail. We collect information about such failures, call it "CI flakiness" (CI instabilities), and add it to the flaky and failed summary statistics.

What are insignificant runs?

Insignificant runs (work items) are defined for selected platforms. They are "allowed to fail": their failures do not stop the integration. An insignificant platform run does not cause the Gerrit stage to fail, even if the run itself fails.
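The effect of the insignificant flag on the outcome of a stage can be sketched like this. The `WorkItem` type and `stage_passes` function are hypothetical names used only for illustration; they are not taken from the CI code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkItem:
    platform: str                # free-form platform label (illustrative)
    passed: bool                 # outcome of the run
    insignificant: bool = False  # "allowed to fail"


def stage_passes(work_items):
    """A stage passes if every significant work item passed.

    Failures of insignificant work items are ignored.
    """
    return all(item.passed or item.insignificant for item in work_items)
```

With this rule, a stage containing one passing significant run and one failing insignificant run still passes, while a single failing significant run fails the stage.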

What is a platform?

The Qt library is multi-module and cross-platform. Often, but not always, Qt modules are built on what we call the host platform but are intended to be run and tested on what we call the target platform. In most cases, the platform is defined by the version of the operating system (e.g. macOS 12 or Ubuntu 20), the processor architecture (e.g. Intel x86 32-bit, x86_64, ARM 64), and the compiler (e.g. clang, gcc, msvc). Additionally, extra features can be defined, and a subset of tests can be run.

A diagram that visually explains the data process:
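As a rough illustration of this definition, a platform and a host/target configuration could be modelled as below. All type and field names are hypothetical and chosen for this sketch; they do not reflect how the CI actually stores its configurations.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Platform:
    os: str        # operating system and version, e.g. "Ubuntu 20"
    arch: str      # processor architecture, e.g. "x86_64"
    compiler: str  # e.g. "gcc"


@dataclass(frozen=True)
class Configuration:
    host: Platform                     # where the code is built
    target: Optional[Platform] = None  # where it runs; None means same as host
    features: frozenset = frozenset()  # extra features (illustrative labels)

    @property
    def effective_target(self):
        return self.host if self.target is None else self.target

    @property
    def is_cross_build(self):
        return self.effective_target != self.host
```

A configuration without an explicit target is a native build, where host and target coincide; giving it a different target platform makes it a cross build.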