Coin glossary for Grafana users

reorganise task types paragraph |

config definition rephrasing |

||

| Line 52: | Line 52: | ||

'''Build platforms''' | '''Build platforms''' | ||

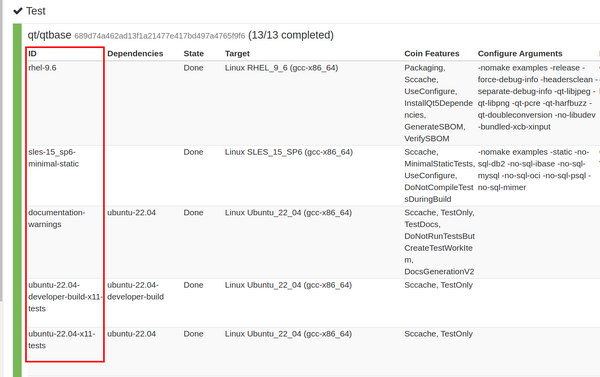

[[File:Config id.png|thumb|COIN web interface displaying different config ids for qt/qtbase test workitem: rhel-9.6, sles-15_sp6-minimal-static, documentation-warnings and other|600x600px]]Qt is a multi-module, cross-platform library. Qt modules are often—but not always—built on a '''host platform''' and then executed and tested on a '''target platform.''' In most cases, a platform is defined by a combination of: operating system and version (e.g. macOS 12, Ubuntu 20.04), processor architecture (e.g. x86, x86_64, ARM64) and compiler (e.g. Clang, GCC, MSVC). In Grafana, this information is exposed through fields such as host_os_version, target_os_version, host_arch, target_arch, host_compiler, and target_compiler. | [[File:Config id.png|thumb|COIN web interface displaying different config ids for qt/qtbase test workitem: rhel-9.6, sles-15_sp6-minimal-static, documentation-warnings and other|600x600px]]Qt is a multi-module, cross-platform library. Qt modules are often—but not always—built on a '''host platform''' and then executed and tested on a '''target platform.''' In most cases, a platform is defined by a combination of: operating system and version (e.g. macOS 12, Ubuntu 20.04), processor architecture (e.g. x86, x86_64, ARM64) and compiler (e.g. Clang, GCC, MSVC). In Grafana, this information is exposed through fields such as host_os_version, target_os_version, host_arch, target_arch, host_compiler, and target_compiler. Note that that some qt builds run on virtual or embedded platforms, and require emulator eg Android, VxWorks. | ||

COIN tracks platform details in even greater detail using so called '''configuration ids''' or '''configs'''. These identifiers describe in more detail concrete build and test environments, for example: ubuntu-22.04-developer-build, ubuntu-22.04-developer-build-x11-tests, ubuntu-24.04-arm64-developer-build-wayland-tests, ubuntu-24.04-arm64-developer-build and many many others. | COIN tracks platform details in even greater detail using so called '''configuration ids''' or '''configs'''. These identifiers describe in more detail concrete build and test environments, for example: ubuntu-22.04-developer-build, ubuntu-22.04-developer-build-x11-tests, ubuntu-24.04-arm64-developer-build-wayland-tests, ubuntu-24.04-arm64-developer-build and many many others. | ||

| Line 59: | Line 59: | ||

Config information is widely used in Grafana dashboards, enabling users to identify the platforms where a test is failing or to view statistics on platform stability. | Config information is widely used in Grafana dashboards, enabling users to identify the platforms where a test is failing or to view statistics on platform stability. | ||

Configs definition is scattered in ''coin/platform_configurations'' in product (module) repos for example:https://code.qt.io/cgit/qt/qt5.git/tree/coin/platform_configs/cmake_platforms.yaml | |||

Additional properties describing configs are called configure arguments such as: -no-pch, -verbose, -opengl-no-xcb, and are also stored in db and can be displayed on dashboards. | |||

Coin_features are parameters used to describe workitemes for example: Sccache,DisableTests, TestOnly, Debug and many many others. | Coin_features are parameters used to describe workitemes for example: Sccache,DisableTests, TestOnly, Debug and many many others. Features trigger for custom instructions mostly defined in qtbase.git/coin/ instructions. | ||

Notable features are that: | |||

'''Insignificant''' coin_feature relates to build and test workitems | '''Insignificant''' coin_feature relates to both build and test workitems. In case of build or test failure - the state is labeled “Insignificant” not “Failed” and they do not stop integration. | ||

'''InsignificantTests''' coin_features relates only to test workitems. | '''InsignificantTests''' coin_features relates only to test workitems. Tests will be executed and workitem will have status "Insignificant" instead of "Failed", in case of failure. | ||

'''DoNotAbortTestingOnFirstFailure:''' run all tests, instead of stopping/aborting after first failure | |||

'''WarningsAreErrors: '''Workitems builds will fail at warnings. | |||

Workitems also have their own unique identifiers (workitem ids), which are used in Grafana dashboards to construct URLs linking to the corresponding workitem logs. Note that cached workitems can be shared among multiple integrations to optimize performance. | Workitems also have their own unique identifiers (workitem ids), which are used in Grafana dashboards to construct URLs linking to the corresponding workitem logs. Note that cached workitems can be shared among multiple integrations to optimize performance. | ||

| Line 105: | Line 109: | ||

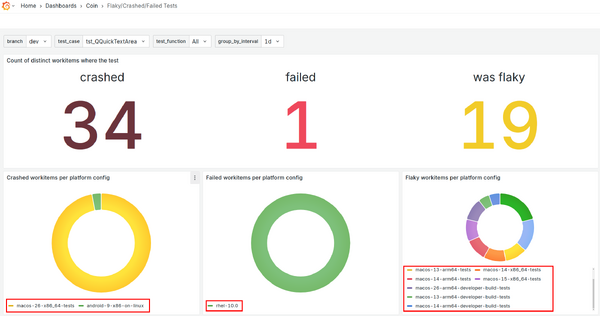

The difference between different categorization and interpretation of test results are widely explained elsewhere, below just a short reminder. Every test that fails is re-executed maximum 5 times. If during those re-runs test passes at least once - it is flagged as flaky. If the test consequently fails 5 times in a row it is flagged as failed. If the test function outcome cannot be resolved, eg xml is corrupted - its is flagged as crashed. Crashed tests are repeated once. | The difference between different categorization and interpretation of test results are widely explained elsewhere, below just a short reminder. Every test that fails is re-executed maximum 5 times. If during those re-runs test passes at least once - it is flagged as flaky. If the test consequently fails 5 times in a row it is flagged as failed. If the test function outcome cannot be resolved, eg output xml is corrupted - its is flagged as crashed. Crashed tests are repeated once. | ||

Special category are blacklisted tests. Blacklisted tests are run, and provide results, marked as BPASS or BFAIL but the result is ignored at the CI level - thus as such cannot fail workitem. | Special category are blacklisted tests. Blacklisted tests are run, and provide results, marked as BPASS or BFAIL but the result is ignored at the CI level - thus as such cannot fail workitem. | ||

Revision as of 09:52, 27 January 2026

This article explains the glossary related to COIN, the Qt Company Continuous Integration system, from a Grafana user’s perspective. It provides a basic understanding of COIN terminology that is helpful for correctly interpreting metrics, dashboards, and trends. The page presents a simplified view of COIN concepts as they appear in Grafana and intentionally omits internal implementation details. For deeper technical explanations, see the references listed in Further reading.

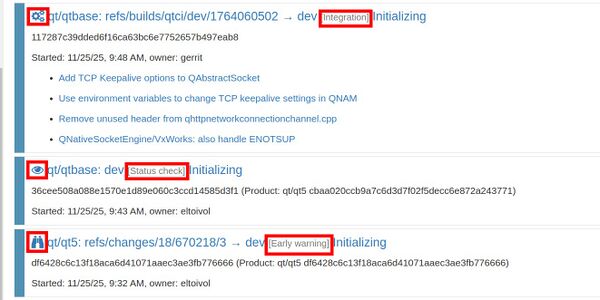

A task represents a single CI job scheduled either by a user or by agents. Every task has a specific type, such as:

- Integration - task that tries to integrate a change in Gerrit into a Qt submodule

- Early warning - pre-check scheduled from Gerrit

- Status check - a job scheduled by a user directly in the COIN web interface

- Nightly and HealthChecks - special tasks run every night to test the latest versions of all Qt submodules. HealthChecks build and test the latest qt5.git and all submodules at their last submodule-updated SHA-1s

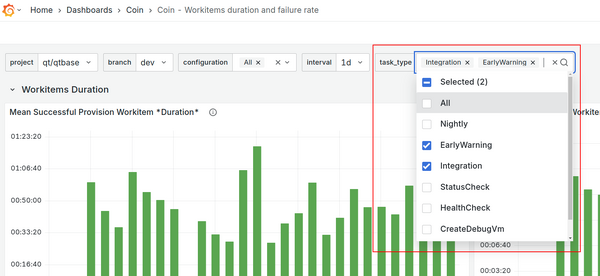

Task types are labeled in the COIN web interface and have distinct icons. The same categorization (for example, Integration vs. Status check) also appears in different tables across Grafana dashboards.

See related dashboards:

Things Gone Wrong in last HealthCheck dashboard

For most flaky test statistics, only test results from integration tasks are typically considered.

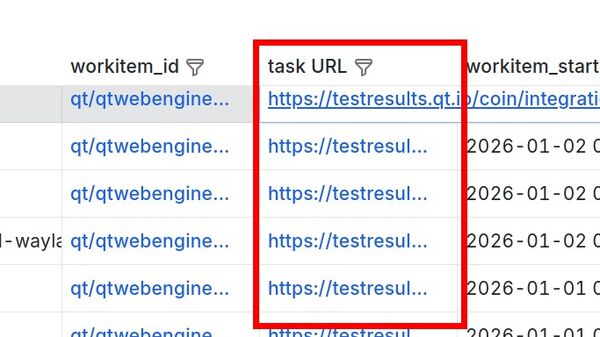

Each task has a unique task id, which serves as its identifier. This id can be used to construct a URL that shows the status and results of a COIN task, following the pattern:

https://testresults.qt.io/coin/integration/qt/{module_name}/tasks/{task_id}

for example:

https://testresults.qt.io/coin/integration/qt/qt5/tasks/nightly1767303902

Here, qt5 is the module_name and nightly1767303902 is the task_id.
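The URL pattern above can be filled in programmatically. The following is a minimal Python sketch, not part of COIN or Grafana: the function name task_url and the constant COIN_TASK_URL are hypothetical, and the sketch assumes the module lives under the qt/ prefix shown in the pattern.

```python
from urllib.parse import quote

# URL pattern taken from the text above; the "qt/" prefix is part of the pattern.
COIN_TASK_URL = "https://testresults.qt.io/coin/integration/qt/{module_name}/tasks/{task_id}"


def task_url(module_name: str, task_id: str) -> str:
    """Build the COIN task URL for a module name and task id (hypothetical helper)."""
    return COIN_TASK_URL.format(
        module_name=quote(module_name, safe=""),  # escape characters unsafe in a path segment
        task_id=quote(task_id, safe=""),
    )


if __name__ == "__main__":
    print(task_url("qt5", "nightly1767303902"))
```

Called with the values from the example, the helper reproduces the example URL shown above.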

Task URLs can be found in panels providing detailed information about test runs (see picture).

Workitems

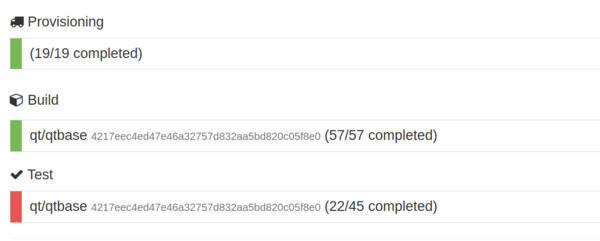

Each task contains several workitems, organized into three categories:

- Provision - create images for building and testing

- Build - build libraries and tests

- Test - execute tests

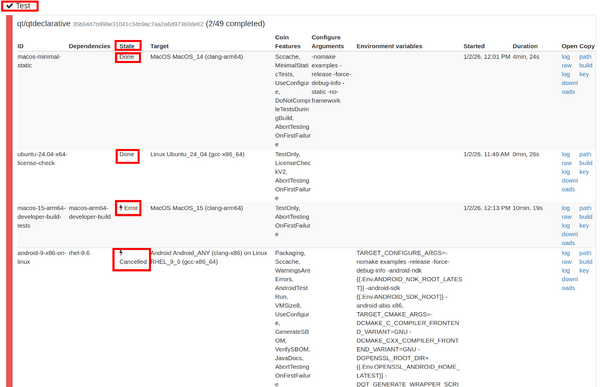

Workitem States

Each workitem has a state that describes its current status or final outcome. Possible states include:

- Done – completed successfully

- Running – currently in progress

- Cancelled – job was cancelled or failed before the workitem completed

- Waiting for hardware

- Failed – encountered some sort of execution error

- Error – any failure outside of the VM running instructions

- Timeout

- Insignificant – workitem failed, but the result does not affect the overall task result

Build platforms

Qt is a multi-module, cross-platform library. Qt modules are often, but not always, built on a host platform and then executed and tested on a target platform. In most cases, a platform is defined by a combination of operating system and version (e.g. macOS 12, Ubuntu 20.04), processor architecture (e.g. x86, x86_64, ARM64), and compiler (e.g. Clang, GCC, MSVC). In Grafana, this information is exposed through fields such as host_os_version, target_os_version, host_arch, target_arch, host_compiler, and target_compiler. Note that some Qt builds run on virtual or embedded platforms, e.g. Android or VxWorks, and require an emulator.

COIN tracks platforms in even greater detail using so-called configuration ids, or configs. These identifiers describe concrete build and test environments, for example: ubuntu-22.04-developer-build, ubuntu-22.04-developer-build-x11-tests, ubuntu-24.04-arm64-developer-build-wayland-tests, ubuntu-24.04-arm64-developer-build, and many others.

Config information is widely used in Grafana dashboards, enabling users to identify the platforms where a test is failing or to view statistics on platform stability.

Config definitions are spread across the coin/platform_configs directories of the product (module) repositories, for example: https://code.qt.io/cgit/qt/qt5.git/tree/coin/platform_configs/cmake_platforms.yaml

Additional properties describing configs are called configure arguments, for example -no-pch, -verbose, and -opengl-no-xcb. They are also stored in the database and can be displayed on dashboards.

Coin_features are parameters used to describe workitems, for example: Sccache, DisableTests, TestOnly, Debug, and many others. Features trigger custom instructions, mostly defined in qtbase.git/coin/instructions.

Notable features:

- Insignificant - applies to both build and test workitems. If the build or the tests fail, the workitem state is labeled "Insignificant" instead of "Failed", and the failure does not stop the integration.

- InsignificantTests - applies only to test workitems. Tests are executed, and if they fail the workitem has the state "Insignificant" instead of "Failed".

- DoNotAbortTestingOnFirstFailure - run all tests instead of aborting after the first failure.

- WarningsAreErrors - workitem builds fail on compiler warnings.
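The effect of the Insignificant and InsignificantTests features on the reported workitem state can be summarized in a few lines of Python. This is only an illustration of the rules described above, not COIN code: the function reported_state and its parameters are hypothetical, and states other than Done, Failed, and Insignificant are ignored.

```python
def reported_state(kind: str, failed: bool, features: set) -> str:
    """Return the workitem state as described in this glossary (hypothetical helper).

    kind     -- "build" or "test"
    failed   -- True if the build or the tests of the workitem failed
    features -- coin_features attached to the workitem
    """
    if not failed:
        return "Done"
    # Insignificant covers both build and test workitems.
    if "Insignificant" in features:
        return "Insignificant"
    # InsignificantTests covers test workitems only.
    if kind == "test" and "InsignificantTests" in features:
        return "Insignificant"
    return "Failed"


if __name__ == "__main__":
    print(reported_state("build", True, {"InsignificantTests"}))
    print(reported_state("test", True, {"InsignificantTests"}))
```

In this sketch a failing build workitem that carries only InsignificantTests is still reported as Failed, because that feature relates only to test workitems.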

Workitems also have their own unique identifiers (workitem ids), which are used in Grafana dashboards to construct URLs linking to the corresponding workitem logs. Note that cached workitems can be shared among multiple integrations to optimize performance.

Additionally, task and workitem duration data is available. An example dashboard can be found at:

Coin - Workitems duration and failure rate dashboard

Environment variables can also be displayed in Grafana.

Note that while the Qt test results Grafana dashboards are public, the underlying database and dashboard editing permissions are restricted to Qt Company internal use.

Both tasks and workitems have additional properties such as project and branch. The project (also referred to as a module) represents the codebase being built and tested within the CI system. Projects are not limited to Qt library modules; they may also refer to other components, such as the Qt Creator project. If a project has dependencies, those dependencies are automatically resolved and built as part of the task. For example, a qt/qtdeclarative task will include build workitems for its dependencies (such as qtbase and qtsvg), but only test workitems for qtdeclarative itself. Branch names depend on the project. In addition to regular Qt project branches, you may encounter temporary branches created by COIN agents or the cherry-pick agent.

Each task is also associated with a SHA-1 hash corresponding to a specific Gerrit change and patchset. This SHA-1 can be used, for example, to identify which Gerrit change introduced a regression and caused a test to start failing.


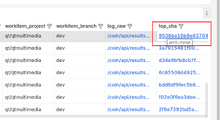

Table view in a Grafana dashboard displaying the SHA-1 property of the task in which a given test has failed


Same patchset SHA-1 visible in Gerrit

Automatic test results

Most of the statistics focus on the results of automatic test runs. The following information can be easily obtained from the dashboards: passed, failed, flaky, crashed, and skipped tests.

Besides the result, each test execution record contains the test case, the test function, and the data tag (if one exists), as well as the execution duration. It can be linked to the corresponding task, workitem, shortened log (log.txt), and full CTest.log.

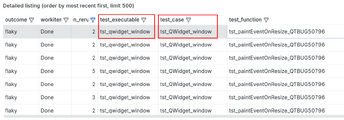

The test case name can be confusing: the database and Grafana store and present both the test executable name and the test case name:

test_case - name of the test case as defined in the source file and present in the result XML file, e.g. <TestCase name="tst_QMediaPlayerBackend">

test_executable - name of the test binary as defined in the makefile; it is also the name of the result XML file and the name used in logs, e.g.:

agent:2026/01/20 02:40:32 build.go:415: 48/64 Test #48: tst_qmediaplayerbackend ..........***Failed 317.73 sec
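To illustrate where the test_executable value appears, the sketch below extracts it from a log line of the shape shown above. It is a hedged example only: the regular expression and the function parse_ctest_line are hypothetical, and they have been written against the single sample line in this section, so other CTest status formats may not match.

```python
import re

# Matches the tail of a CTest summary line such as:
#   "48/64 Test #48: tst_qmediaplayerbackend ..........***Failed 317.73 sec"
# Pattern derived from the one sample line in this glossary (an assumption).
CTEST_LINE = re.compile(
    r"Test\s+#(?P<index>\d+):\s+(?P<executable>\S+)\s+\.+\s*"
    r"(?:\*\*\*)?(?P<status>\w+)\s+(?P<seconds>\d+(?:\.\d+)?)\s+sec"
)


def parse_ctest_line(line: str):
    """Return a dict with the test executable, status and duration, or None if no match."""
    match = CTEST_LINE.search(line)
    if match is None:
        return None
    return {
        "index": int(match.group("index")),
        "test_executable": match.group("executable"),
        "status": match.group("status"),
        "seconds": float(match.group("seconds")),
    }


if __name__ == "__main__":
    sample = ("agent:2026/01/20 02:40:32 build.go:415: 48/64 Test #48: "
              "tst_qmediaplayerbackend ..........***Failed 317.73 sec")
    print(parse_ctest_line(sample))
```

Note that the executable name in the log (tst_qmediaplayerbackend) differs in letter case from the test case name in the XML (tst_QMediaPlayerBackend), which is why the two fields are stored separately.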


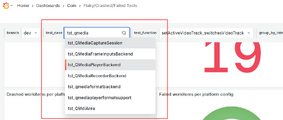

Test case used in a Grafana drop-down menu

Grafana table view listing test case name and executable

The categorization and interpretation of test results are explained in detail elsewhere; what follows is only a short reminder. Every test that fails is re-executed a maximum of 5 times. If the test passes at least once during those re-runs, it is flagged as flaky. If the test fails 5 times in a row, it is flagged as failed. If the outcome of a test function cannot be resolved, e.g. because the output XML is corrupted, it is flagged as crashed. Crashed tests are repeated once.

Blacklisted tests are a special category. They are run and produce results, marked as BPASS or BFAIL, but the result is ignored at the CI level, so it cannot fail the workitem.

Note that combinations of outcomes can occur. For example, a blacklisted test can still crash, and blacklisting does not prevent a crash from stopping the integration. Similarly, a test can crash during the repetition of failed tests.
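The flaky/failed rule can be written down as a small Python sketch. It is an interpretation of the description above, not the COIN implementation: the function classify is hypothetical, the sketch assumes the 5 re-runs come after an initial failing run, and crashed results are deliberately left out.

```python
from typing import Sequence

MAX_RERUNS = 5  # maximum number of re-executions, as stated above


def classify(initial_passed: bool, reruns: Sequence[bool]) -> str:
    """Classify a test from its initial run and the outcomes of its re-runs.

    reruns holds True for a passing re-run and False for a failing one;
    only the first MAX_RERUNS entries are considered.
    """
    if initial_passed:
        return "passed"
    considered = reruns[:MAX_RERUNS]
    # A single passing re-run is enough to flag the test as flaky.
    if any(considered):
        return "flaky"
    # Every considered re-run failed as well.
    return "failed"


if __name__ == "__main__":
    print(classify(False, [False, False, True]))
    print(classify(False, [False] * 5))
```

In practice re-running presumably stops at the first pass, so a flaky test usually has fewer than 5 recorded re-runs; the sketch accepts any number of outcomes up to the limit.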

Further reading

https://testresults.qt.io/coin/coininfo

https://testresults.qt.io/coin/doc/